Summary

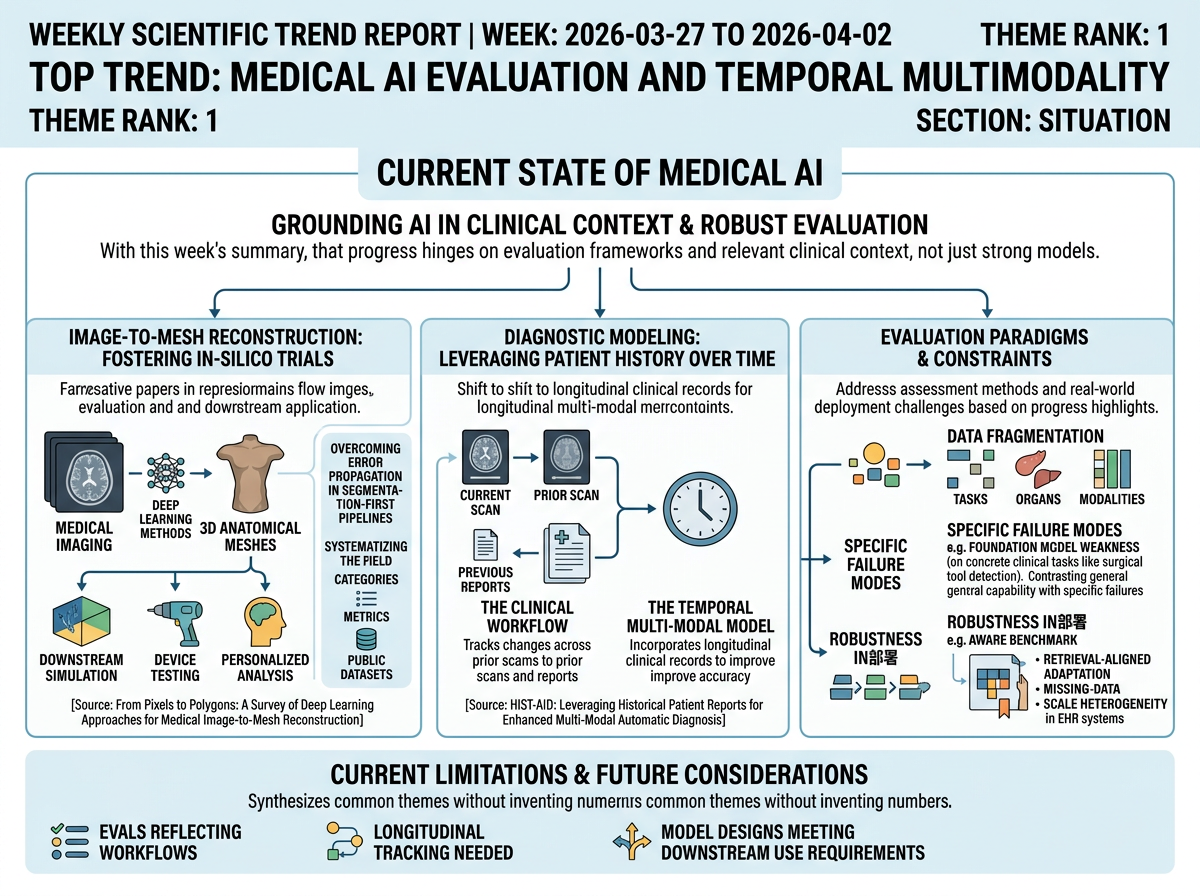

This week's representative papers highlight that medical AI progress hinges on clearer evaluation frameworks and richer clinical context, not only on stronger models. One work systematizes end-to-end image-to-mesh reconstruction through taxonomies, metrics, and curated datasets, while another demonstrates that incorporating longitudinal reports improves diagnostic accuracy over single-timepoint imaging alone.

Situation

The representative papers frame medical AI as moving toward more clinically grounded modeling and assessment. In image-to-mesh reconstruction, accurate 3D anatomical meshes are presented as foundational for computational medicine and in-silico trials, connecting medical imaging to downstream simulation, device testing, and personalized analysis. The survey argues that end-to-end deep learning methods are emerging to overcome error propagation in segmentation-first pipelines, and organizes the field through method categories, evaluation metrics, and publicly available datasets.
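This digest does not list the survey's specific metrics. As one illustration of a geometric-accuracy measure commonly applied to reconstructed surfaces, the minimal sketch below computes a symmetric Chamfer distance between points sampled from a predicted mesh and a reference mesh. The function name, the sample points, and the choice of unsquared, mean-averaged distances are assumptions made for illustration and are not taken from the survey.

```python
import math


def chamfer_distance(points_a, points_b):
    """Symmetric Chamfer distance between two non-empty 3D point sets.

    Assumed variant: mean of unsquared nearest-neighbor distances,
    summed over both directions. Other definitions (squared distances,
    different normalization) are also in use.
    """
    if not points_a or not points_b:
        raise ValueError("both point sets must be non-empty")

    def mean_nearest(src, dst):
        # Average distance from each source point to its closest target point.
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

    return mean_nearest(points_a, points_b) + mean_nearest(points_b, points_a)


# Hypothetical vertices sampled from a predicted and a reference mesh.
predicted = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
score = chamfer_distance(predicted, reference)
```

The brute-force nearest-neighbor search is quadratic in the number of points, which is acceptable for a small illustration; dense meshes would normally call for a spatial index. A low score indicates only geometric agreement and says nothing about whether a mesh is suitable for simulation.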

In diagnostic modeling, the focus shifts from isolated images to patient history over time. The HIST-AID paper shows that chest X-ray interpretation in practice relies on tracking changes across prior scans and reports, motivating a temporal multi-modal dataset and framework that incorporate longitudinal clinical records. Together, these papers point toward evaluation setups and model designs that better reflect real clinical workflows, temporal progression, and downstream use requirements.

Progress

Project Imaging-X: A Survey of 1000+ Open-Access Medical Imaging Datasets for Foundation Model Development

A large-scale survey cataloging 1,000+ open-access medical imaging datasets exposes fragmentation across tasks, organs, and modalities, and proposes a metadata-driven fusion paradigm. Compared with prior task-level dataset reviews, this work provides a broader map of data availability and highlights structural gaps relevant to foundation-model training and evaluation.

A Comparative Study in Surgical AI: Datasets, Foundation Models, and Barriers to Med-AGI

A comparative study tests vision-language foundation models on neurosurgical tool detection and finds that even large-scale models fall short on this concrete clinical task. Rather than assuming broad foundation-model capability, this work pinpoints specific failure modes and barriers to medical AGI in surgical settings.

Curia-2: Scaling Self-Supervised Learning for Radiology Foundation Models

Curia-2 scales self-supervised pretraining for radiology and improves representation quality, outperforming prior radiology foundation models on vision-focused tasks. Compared with earlier pretraining strategies, it better captures radiology-specific features and competes with vision-language models on clinically complex tasks such as detection.

Retrieval-aligned Tabular Foundation Models Enable Robust Clinical Risk Prediction in Electronic Health Records Under Real-world Constraints

AWARE benchmarks classical, deep, and tabular foundation models for EHR-based clinical risk prediction across varying data scales and real-world constraints. Beyond standard benchmarks, it introduces retrieval-aligned adaptation to improve robustness under the missing-data and scale heterogeneity common in clinical EHR deployment.

Outlook

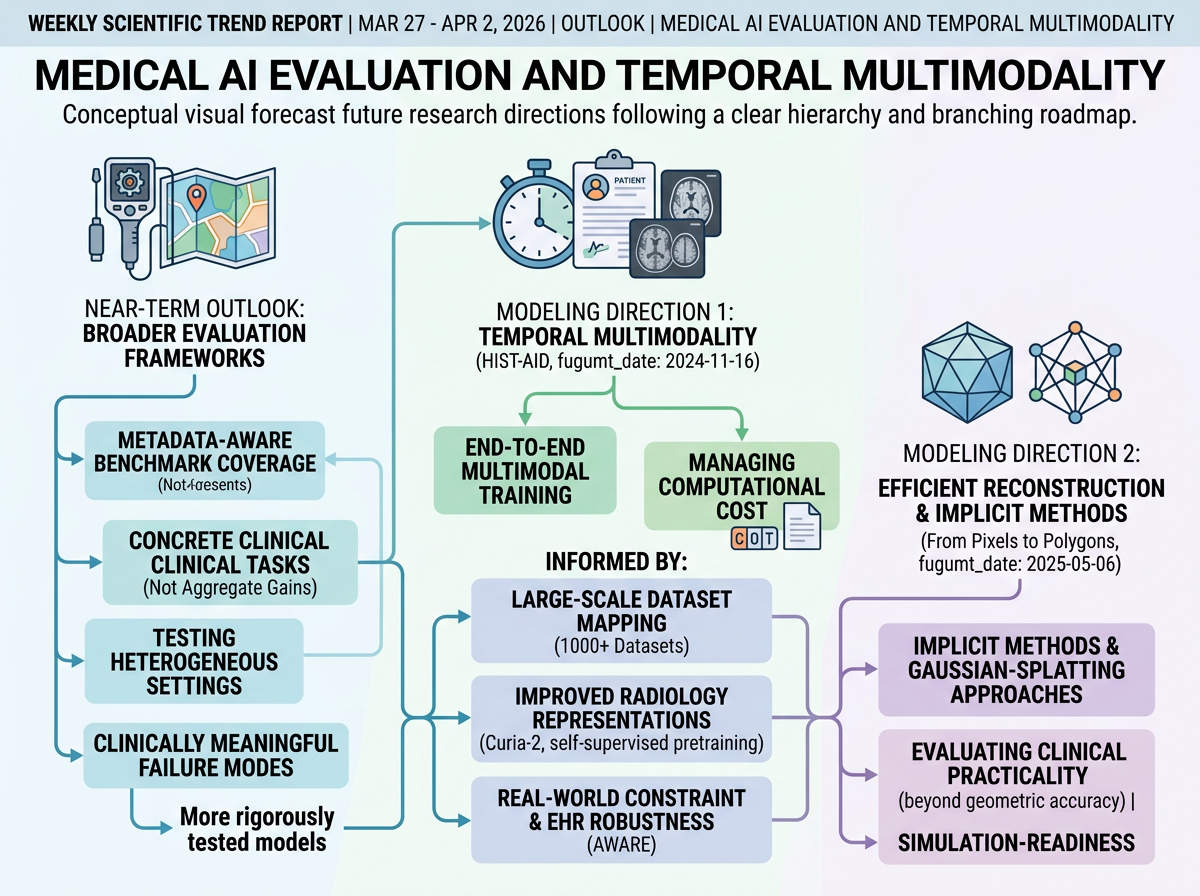

Near-term work will likely focus on broader, metadata-aware benchmark coverage and evaluation on concrete clinical tasks, rather than relying on aggregate performance gains from general foundation models. This week's progress supports that direction: large-scale dataset mapping, radiology-specific pretraining assessment, and targeted surgical and EHR evaluations all point toward testing models across heterogeneous settings, data scales, and clinically meaningful failure modes.

On the modeling side, the representative papers suggest two likely directions grounded in their own future-work sections. For temporal diagnosis, researchers will probably pursue more effective end-to-end multimodal training that makes historical scans useful alongside reports, while managing the computational cost of longer report sections. For reconstruction, implicit methods should remain central, with growing interest in efficient high-fidelity surface representations (such as Gaussian-splatting-based approaches) that are evaluated not only on geometric accuracy but also on simulation-readiness and clinical practicality.

References

- HIST-AID: Leveraging Historical Patient Reports for Enhanced Multi-Modal Automatic Diagnosis - Authors: Haoxu Huang, Cem M. Deniz, Kyunghyun Cho, Sumit Chopra, Divyam Madaan / License: CC-BY-4.0

- From Pixels to Polygons: A Survey of Deep Learning Approaches for Medical Image-to-Mesh Reconstruction - Authors: Fengming Lin, Arezoo Zakeri, Yidan Xue, Michael MacRaild, Haoran Dou, Zherui Zhou, Ziwei Zou, Ali Sarrami-Foroushani, Jinming Duan, Alejandro F. Frangi / License: CC-BY-4.0